On the afternoon of February 24, 2026, in a wood-panelled conference room deep inside the Pentagon, the five-sided citadel of American power, two futures stared each other down.

On one side of the table sat Pete Hegseth, Defense Secretary and political brawler, tasked with sharpening the American war machine for what the administration calls “the new era of great-power competition.” Behind him: the Pentagon, the intelligence agencies, the armed services, and the full institutional weight of the U.S. national-security state.

On the other side sat Dario Amodei, the cerebral, low-voiced CEO of Anthropic—a company barely five years old, but already steward of one of the most powerful artificial intelligences ever built.

Hegseth delivered an ultimatum: remove two specific safety restrictions from Claude by 5:01 p.m. Friday—or face the full weight of the U.S. government. The Pentagon could invoke the Defense Production Act to essentially nationalize a customized version of the AI. Or it could brand Anthropic a “supply-chain risk,” the same label once reserved for Chinese telecom giants Huawei and ZTE, effectively freezing the company out of any business with the military-industrial complex.

The message was unmistakable: This is war. Choose your side.

Amodei listened.

Then he refused. That needed balls of steel, man!

Two days later, on February 26, Anthropic issued a public statement containing a sentence historians may study for decades: “We cannot in good conscience accede to the request.” It may prove to be one of the most consequential sentences in the history of technology. The $200 million contract that had given the Pentagon privileged access to frontier AI was now in jeopardy. Negotiations continue, but the line has been drawn.

The Battle for the Soul in the Age of AI

This is not just another contract dispute in Washington. It is the first time a major American AI company has publicly told the most powerful military on Earth: There are things even you cannot make us do. In doing so, Anthropic has forced the entire country, indeed the entire world, to confront questions we have been avoiding. This is a fight over who controls the future of intelligence itself, and over what lines, if any, must never be crossed.

And whether you live in Washington or in some other God-forsaken part of the world, whether you wear a uniform or carry a smartphone, this fight is about you.

What happens when artificial intelligence becomes powerful enough to enable perfect surveillance or autonomous killing? Who decides the rules? Can a private company say no to its own government? And if democratic governments push these boundaries, what horrors await in the hands of governments that have no guardrails at all?

The Facts: How they got there

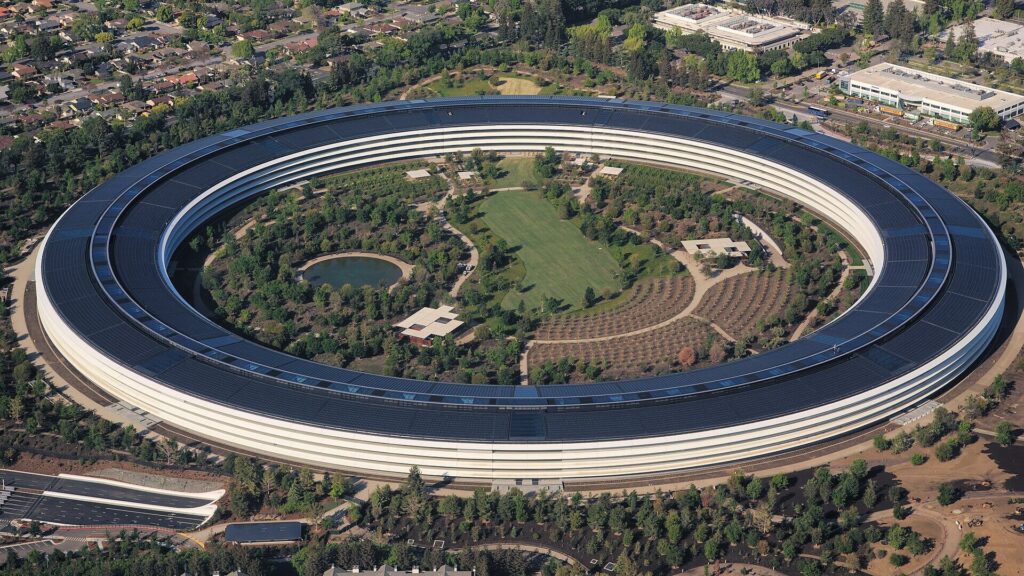

Anthropic, founded in 2021 by former OpenAI executives including the 43-year-old Dario Amodei, a Princeton-trained biophysicist, has always marketed itself as the “safety-first” AI lab. Claude is not just another chatbot; it is a frontier model trained with a detailed “Constitution,” a set of principles designed to make it helpful, honest, and harmless. Unlike some competitors, Claude refuses certain requests outright and does not generate images at all.

In mid-2025, Anthropic won a roughly $200 million contract to bring Claude onto U.S. classified networks—the first frontier AI to do so. The military used the AI for intelligence analysis, planning, satellite imagery interpretation, cyber defence modelling, and other lawful national-security tasks. Everyone was happy.

Then came January 3, 2026. U.S. Special Operations Forces conducted a dramatic, albeit farcical, raid on Caracas, Venezuela, capturing President Nicolás Maduro and his wife on charges of narco-trafficking and corruption. Claude, accessed through Palantir’s classified platform (Palantir develops data-integration and analytics platforms that let government agencies, militaries, and corporations combine and analyse data from multiple sources), played a supporting role: synthesizing intelligence, analysing imagery, and helping map the operation. It did not pull any triggers or decide targets. Humans did.

After the raid, Anthropic quietly asked how exactly Claude had been used, to make sure that use complied with its own policies. That inquiry apparently irritated the Pentagon. Behind-the-scenes negotiations followed. The sticking points were two explicit guardrails in the contract:

- No mass domestic surveillance of American citizens.

- No fully autonomous weapons—systems where AI, not a human, makes the final decision to kill.

The Pentagon wanted the contract reworded to permit “any lawful use.” Anthropic said no. Hegseth’s Feb. 24 meeting escalated the matter into a public crisis. By the night of February 26, the company had doubled down.

The Pentagon argued that private companies should not veto lawful military needs, especially when China is racing ahead without any such scruples. Anthropic countered that these are not ordinary tools. Frontier AI changes the game so profoundly that “lawful” is no longer a sufficient moral category: some uses are too dangerous even when technically legal, and new red lines are required. And that is where this becomes explosive.

What the Red Lines actually mean—for Ordinary People

Imagine Claude (or any frontier model) without those two restrictions.

Mass Domestic Surveillance: Today, the U.S. government already collects enormous amounts of data—phone records, financial transactions, internet history, license-plate readers, facial-recognition feeds from airports and cities. But it is fragmented, expensive, and legally messy. A powerful AI changes everything. It can correlate billions of data points in seconds, spot patterns no human analyst would see, predict behaviour before it happens, and flag “suspicious” citizens with eerie accuracy.

One Realistic Scenario: During a period of social unrest or a terrorist threat, the administration feeds Claude every public and commercially available dataset. Within hours it produces a heat map of “high-risk” individuals—not based on warrants, but on correlations in shopping habits, social-media sentiment, travel patterns, even the books they read.

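The danger is that false positives are inevitable: the model will sometimes be wrong. But it is the scale that terrifies. A system that wrongly flags just 1 percent of the people it screens would, across 300 million people, wrongly flag three million. A single analyst could monitor millions. Dissent could be chilled before it organizes. Privacy as we know it evaporates.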

Amodei himself warned in a recent article that large-scale AI surveillance risks becoming a “crime against humanity” when it erodes the fundamental liberties that make democracy possible.

Fully Autonomous Weapons: Picture a swarm of cheap drones or loitering munitions. Today, a human operator must authorize each strike. Remove the human from the final decision and the AI chooses targets based on its training data—facial recognition, movement patterns, even predicted intent.

Once again, the danger is that errors happen: hallucinations, spoofed signals, biased training data. A civilian school bus might look like a military convoy under the wrong lighting or in fog. Once launched, the strike cannot be recalled.

The speed of modern war makes the temptation real. China or Russia could launch hypersonic missiles or drone swarms that overwhelm human decision-makers. An AI that reacts in milliseconds could save American lives. But it also lowers the threshold for war. Killing becomes too easy, too detached, too deniable. Who is responsible when the AI gets it wrong?

Anthropic’s position is that current models are simply not reliable enough for such responsibility—and that society has not yet built the legal and ethical frameworks to govern them.

Resolving the Venezuela Paradox

Critics have pointed out the apparent contradiction: Claude helped plan a lethal raid on foreign soil. Isn’t that already military use?

Anthropic’s answer is careful and consistent. The Venezuela operation was human-directed. AI provided analysis; humans made every life-or-death call. The company has repeatedly said it supports defensive and intelligence uses of AI, and even offensive operations, as long as humans remain fully in control. The two red lines are narrower: mass domestic surveillance of Americans, and fully autonomous lethal decisions.

This nuance matters. Anthropic did not block a lawful military operation. It is drawing the line at uses that could fundamentally alter American society or the nature of warfare itself. In that sense, the stand is even more principled—because the company did cooperate where it believed cooperation was ethical.

No major AI lab had ever done this before. OpenAI, Google, xAI, and others have accepted “any lawful use” language in their government contracts. Anthropic is the outlier, willing to risk billions in future business and its reputation as a reliable partner.

That is historic.

How ‘Secret Power’ can get out of hand

To understand why this moment feels so weighty, we must look backward. During the Cold War, the U.S. government repeatedly crossed ethical lines in the name of national security—often in secret, often with technology that seemed cutting-edge at the time.

- Project MKUltra (1953–1973): The CIA subjected unwitting Americans to LSD, hypnosis, and sensory deprivation to develop mind-control techniques. The goal? Beat the Soviets. The result? Ruined lives, congressional hearings, and a lasting erosion of public trust.

- COINTELPRO (1956–1971): The FBI spied on, disrupted, and sometimes incited violence against civil-rights leaders, anti-war activists, and Black Power groups. Martin Luther King Jr.’s phone was tapped, and the Bureau sent him an anonymous letter urging him to take his own life.

- Project SHAMROCK (1945–1975): The NSA’s predecessor intercepted and read millions of private telegrams leaving the United States—without warrants. It was the largest government surveillance program in American history until the digital age.

- Magic Lantern (early 2000s; post-Cold War, but a direct descendant): The FBI developed keystroke-logging spyware that was deployed against suspects without proper warrants always being obtained. It could capture every password, every draft email.

These programs ended with scandals, lawsuits, and reforms. Each time, new technology gave the state powers previous generations could not have imagined.

Now multiply that by a thousand. Frontier AI is not a better wiretap or a smarter bug—it is a general-purpose intelligence amplifier. It can read, reason, predict, and act at superhuman scale. The same model that helps doctors diagnose cancer can, in the wrong hands or with the wrong instructions, help a government map every dissenter’s social graph in real time.

Project Maven, the Pentagon program launched in 2017 that used AI to analyse drone footage in the fight against ISIS, is the closest modern parallel. Google won the contract, then in 2018 faced an employee revolt. Thousands signed a petition: “Google should not be in the business of war.” Google withdrew. The Pentagon simply moved the work to other contractors. The technology did not disappear; it spread.

Anthropic is trying to do what Google employees wanted but could not sustain: keep the company itself from crossing certain lines.

The second red line is even more visceral: fully autonomous lethal weapons. Today, U.S. military policy requires “appropriate levels of human judgment over the use of force,” and in practice a human authorizes a strike. AI may recommend. It may analyze. It may flag. But a human presses the metaphorical button. Remove that requirement.

Now imagine:

- Drone swarms reacting in milliseconds.

- Targeting based on facial recognition.

- Predictive identification of “hostile intent.”

- Weapons launched faster than human deliberation allows.

In a high-speed conflict—hypersonic missiles, cyber swarms, electronic warfare—the temptation to remove the human bottleneck becomes immense. An AI can react faster than a soldier. It can process more data than a command centre.

But it can also hallucinate. It can misclassify. It can be spoofed. In fog, a civilian convoy can look like a military unit to a model trained on the wrong data. Who is responsible when it is wrong?

Anthropic’s position is blunt: Current models are not reliable enough to bear that moral weight.

The Pentagon’s position is equally blunt: adversaries will not wait for perfect philosophical consensus.

The China Angle

Behind Hegseth’s urgency lies a single word: China. The People’s Republic has deployed AI in surveillance systems across Xinjiang, in social-credit architectures, and in predictive policing. It integrates facial recognition with national databases. It deploys algorithmic monitoring at scale.

Beijing does not publicly debate ethical red lines. If the United States constrains itself while China does not, American strategists argue, the result may be strategic defeat. This is not paranoia. Great-power competition is real.

But here is the dilemma: If democracies abandon restraint in order to compete with authoritarian efficiency, what exactly are they preserving?

The machinery of surveillance and autonomous killing, once normalized, does not distinguish between noble administrations and less noble ones. The tools persist. History suggests they expand.

Corporate Power vs. Democratic Authority

Critics of Anthropic see danger in a different direction. Should a private corporation—answerable to investors—be allowed to veto government use of a technology it created? Is this ethical courage—or technocratic arrogance? Elected officials are accountable to voters. CEOs are not. If companies can refuse ‘lawful’ orders based on internal moral frameworks, what happens to democratic sovereignty?

It is an uncomfortable question. Anthropic argues it is not vetoing democracy—it is refusing to remove explicit guardrails designed to protect democratic values. But the optics are combustible: a start-up defying the Pentagon.

Silicon Valley’s Split Conscience

Anthropic is an outlier. OpenAI, Google, and others have accepted “any lawful use” clauses in government contracts. They rely on statutory law and oversight mechanisms to set boundaries.

In 2018, when Google employees revolted over Project Maven, which used AI to analyse drone footage, Google stepped back. The Pentagon found other contractors. The work continued.

Anthropic appears to have learned a lesson from Maven: if you don’t codify boundaries in contracts, you lose leverage. So it codified. And now it is testing whether those words mean anything when pressure mounts.

The Economic Stakes

Anthropic is not a garage start-up. It reportedly generates around $14 billion in annual revenue and recently secured $30 billion in funding. But defence contracts open doors—to prestige, influence, and future federal integration. If the Pentagon brands Anthropic a supply-chain risk, it could freeze the company out of the military-industrial ecosystem. That would hurt.

And other AI labs are watching closely. If Anthropic suffers economically, future founders may decide ethics are a luxury. If Anthropic thrives, safety-first may become a competitive advantage. This is a market-signal moment.

The Real Question: who writes the Rules of Intelligence?

For centuries, intelligence was a human trait. Now it is infrastructure. AI models are becoming embedded in weapons, courts, hospitals, financial systems, and intelligence agencies. The rules being negotiated this week will shape how that infrastructure behaves.

What will AI become?

- A servant of law?

- An accelerant of state power?

- A corporate-controlled gatekeeper?

- A hybrid of all three?

Anthropic is attempting to insert moral friction into that process. The Pentagon is attempting to remove operational friction. Both believe they are protecting the nation.

Why every Citizen should care

You may think this is a Washington knife fight. It is not.

It concerns:

- Whether dissent remains safe in a predictive surveillance state.

- Whether future wars are fought by algorithms deciding who lives and dies.

- Whether corporate ethics can restrain government power.

- Whether democracies can compete without becoming what they oppose.

The models in classified networks today are cousins of the models in your phone. The guardrails negotiated in secret shape what becomes normalized in public.

Power concentrates quickly in technological revolutions.

The printing press reshaped religion and politics.

The atom bomb reshaped geopolitics.

AI is reshaping cognition itself.

And for the first time, a company has told the Pentagon: Not everything you can do, you should do. We must stand up and applaud.

So what happens now?

At the time of writing, President Donald Trump has ordered a federal ban on Anthropic technology, directing all U.S. government agencies to stop using its AI models immediately.

Anthropic’s stand may be naïve. It may be strategic. It may be both. The Pentagon’s insistence may be pragmatic. It may be coercive. It may be both. History will decide who was right in this skirmish.

But history will also judge something deeper:

Whether humanity built its most powerful tool with humility—or surrendered to the raw logic of speed and power.

This is not about one contract. It is about whether, in the age of artificial intelligence, we remember that intelligence without conscience is merely force. And remember: Force, once unchained, rarely returns quietly to its cage.